Who wrote this? (And why do you care?)

A version of a short talk given at Drake University on April 20, 2023

[ last time I visited my substack dashboard there was a button there prompting me to republish this specific essay and I accidentally clicked it, and it was done, re-shared. whoops. why did substack’s algorithms choose THIS piece to prompt, I wonder? ]

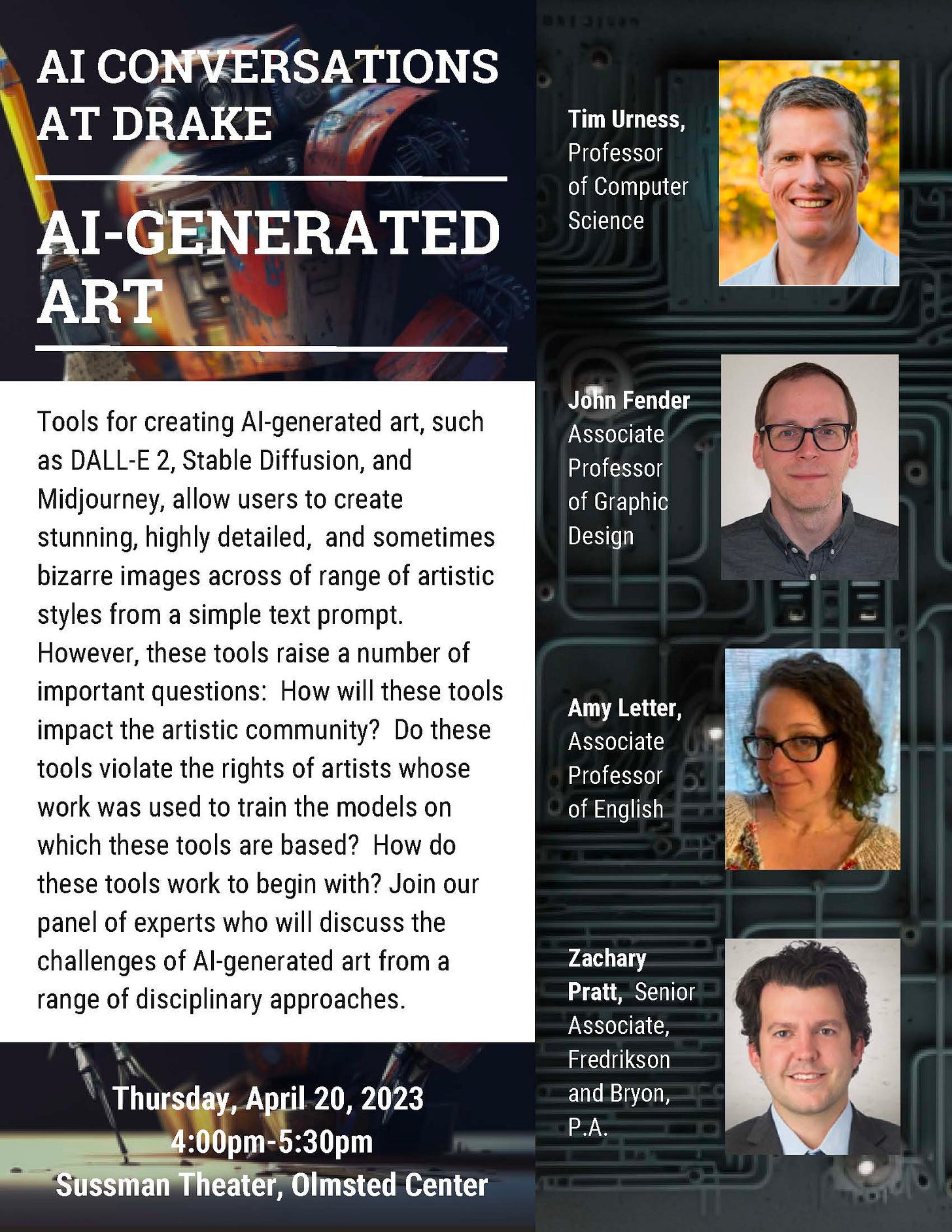

This week [ from April 2023 ] I was part of a panel presentation at my university that also featured two talented colleagues from Computer Science and Art and Design, and a lawyer who specializes in intellectual property.

It was a really enjoyable panel, well attended with a great Q&A at the end, and I liked spending a few days thinking on the subject and preparing a presentation. My approach was not like an essay: to present, I had an outline of ideas I could expound on and relate to what the other speakers said — I find that works better for me when public speaking. But that means no well-thought-through written form exists, and so I wanted to write up a version of my presentation as an essay.

The focus of our discussion was AI and Art, and especially the advent of generative AI, which we ask to make a picture for us, and it does. I’m especially interested in the idea of authorship — not just ownership, but the added aura or information that comes with knowing a work was created by a certain person, in a certain place, at a certain time. I started by sharing these four copies I made of famous paintings. The audience was able to identify them all, even though they are very approximate copies.

Then I explained why I’d made these copies: they are all actually very small. Most of them smaller than a quarter. I wanted to see how tiny I could render a famous painting and have it remain identifiable. This was a challenge I set for myself. The question for my audience is, how does this information affect what you think of the images? Do they seem less terrible when you know they are tiny? Does the fact that they were a part of a challenge I’d set for myself change what they are, make them less like crappy imitations and more like something else?

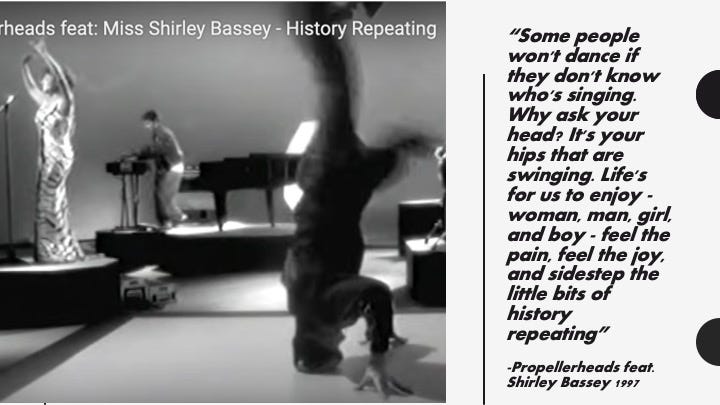

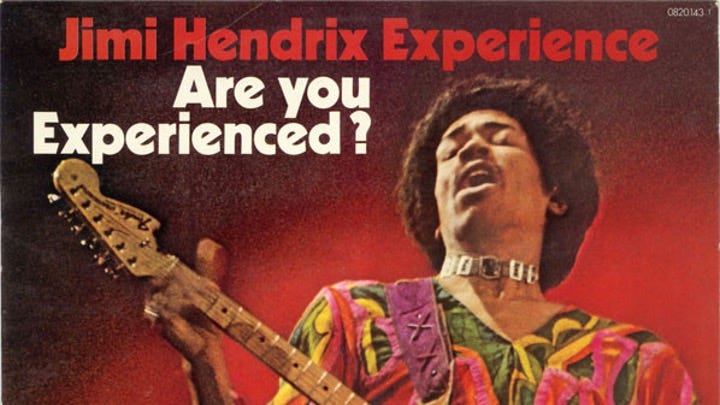

In the 1997 Propellerheads song “History Repeating,” Shirley Bassey sings these lines:

At the time the song was released, 1997, AI-generated music was not a possibility. The only thing these lines could be referring to is whether you’re hearing music sung by a famous person or a non-famous person. The song suggests you should listen to your hips: if your hips are swinging, don’t let your head stop you from enjoying the music. Today we have to hear these lyrics and consider the possibility that “we don’t know who’s singing” because the origins of works of art now include a third option: made by machine. Do you want to ignore your head and follow your hips to the beat of a machine-sung song?

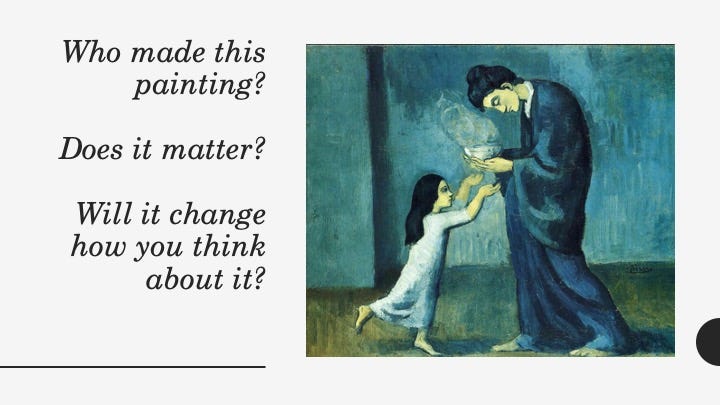

This is a foundational question about how we regard art: will you read a book, listen to a song, go to a show, if you don’t know “where it came from”? And even if you couldn’t tell me one thing about Pablo Picasso (who made the painting above), wouldn’t you be more likely to go see a showing of his work than a showing of the work of someone you’d never heard of? Wouldn’t you be more likely to accept and show on your wall a painting by Picasso than a similar work created by a relatively unknown art student? And of course you must acknowledge that if the painting were “a Picasso,” that would inflate the amount of money at which it would be valued.

Legend has it that Picasso would dine at a restaurant and pay with a sketch he did at the table while eating. The restaurateur would be delighted because the monetary value of a quick sketch that Picasso crapped out between his langoustine and his beef bourguignon might equal the restaurant’s receipts for the night, or a week, or a month.

How come I can’t do that? How come you can’t do that? How come if I try to pick up girls, I get called an asshole, but he doesn’t? It’s almost like who you are matters in respect to what you can get away with, and how much your creations will be valued.

I asked an iPhone generative image app called Picsart to create an image of Drake’s landmark Old Main and our mascot Griff the bulldog in the style of Picasso’s blue period. It generated a lot of garbage showing varying interpretations of those words. My favorite is not the best, but the funniest: the one that shows Griff humping Old Main, which also has a dog-head, and which shows the blur of distorted water-markery in the middle, a sure sign that the image was generated from a dataset that included copyrighted images. It’s a perfect little disaster.

It’s hard to be on a panel about AI and art and resist generating an image or two. Three of the four presenters at this event included different takes on an image of our event. These were mine:

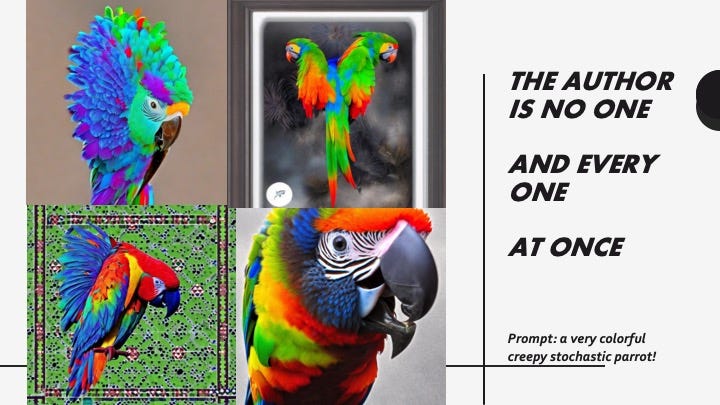

But it must be noted that I looked at several dozen images and selected these few because I liked them. The generative AI pumps stuff out, but it can’t know what you like or decide what’s good. It can just keep pumping. When someone shares one or a few generative AI created artworks with you, they’re probably showing you a curated sample, not a random sample. Here I asked the same app to provide me an image of a “very colorful creepy stochastic parrot.”

Unlike you or me or Pablo Picasso, a generative-AI-created visual work does not have a simple human source. Probably the popular art object that comes closest to this level of complexity is a feature-length animation, like Encanto or The Mitchells vs. the Machines, where there is the director, and writers, and the corporation that funds the project, and the thousands of artists and engineers who are all necessary to the creation of the work, and all of the support staff who are necessary to the creation of their work. But at the end of the film, a long, long, long list of their names flows and flows: there are thanks to whole cities and countries, detailed credits for every song used. A generative AI credits no one, even though it might “borrow” from many, many more people, including you, if you’ve ever posted a picture online. It includes pretty much everyone.

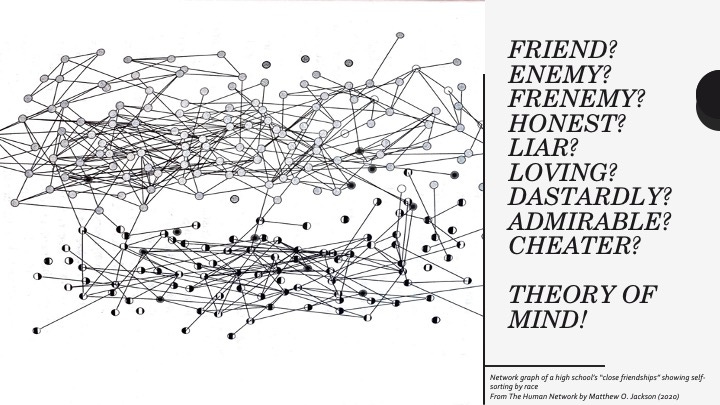

We humans live in social networks — not just online, but in real life too. And in those social networks, we have to be aware of who is whom — we have to know who knows what and who’s connected and popular — who can try to pick up girls and not get called an asshole — we are a social species; knowing these things is essential to our survival.

This is why theory of mind is not optional for human beings — it’s a survival skill. We have to understand the other people we interact with in our network as thinking minds, so we can understand and anticipate events. Generative AIs are frustrating to us, because they’re interacting with us in our network, seemingly contributing to its culture, but they have no actual identity. In this way, they are the ultimate bullshitters. HG Frankfurt’s essay On Bullshit is instructive here on a relatively minor point that it makes: when everyone involved is a willing and consenting participant in the bullshit, it’s actually a particularly fun, creative and lively form of conversation.

Yet if people are not willing participants in the “bull session,” they are instead just “being bullshitted”: they’re being deceived, disrespected. This raises the question of whether the AI-generated image is acknowledged as such, and even presented alongside its prompt and the name of the app, as I’ve done here, or whether it’s presented uncredited, as I’ve done below, alongside a critical passage from On Bullshit that I hand-wrote out and photographed to include in this slideshow.

I’d like you to ask yourself if the quote feels any different when you know that I took the time to hand-write it out, rather than copy-paste from the PDF. How we understand the origins of something matters. If we perceive its origins in laziness and corner-cutting, we’ll feel one way. If we perceive someone to have exerted a lot of effort, we’ll feel another.

I think there’s a human version of this too; I’m a doodler, and no matter what else I’m doing, I’m very often also drawing (even though I’m positive I’ll never get a free dinner out of it!)… here are some doodles I made while in a meeting yesterday…

Low-effort little sketches of ideas are good for creativity. Every now and then I like something I doodle enough to photograph it, clean it up, and use it in a substack post or something else. In this set I really like the bunny and the plant. I consider myself a serious artist whose medium is primarily words, but playing with images is really important to how I see myself too. If you’ve ever been on a Zoom call with me, you might recognize my avatar: it was part of the experiment I mentioned at the start, an attempt at a tiny Mona Lisa. It was the largest one I made, and the most cartoon-y. It felt like me, and I started using it as my face.

Marshall McLuhan might have argued that serious art is less about production and more about awareness:

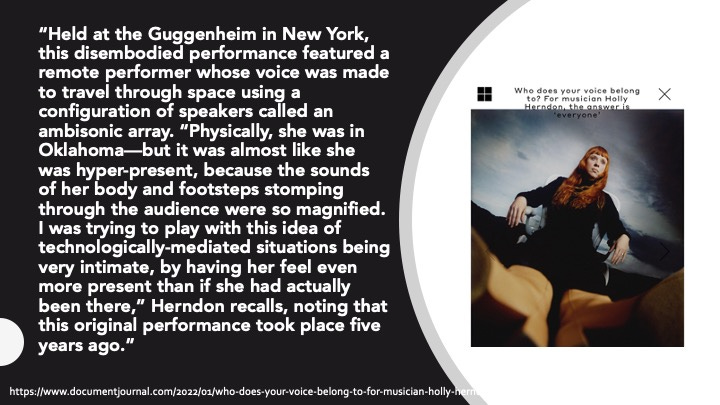

I thought of McLuhan when I read about Holly Herndon, a singer, musician and sound artist who works with AI. Here’s a description of one of her works:

This is what artists do: “play with this idea” — mess around with it and see what happens. Sometimes the result is very small and sometimes it is large, but small and large are both part of the play, the exploration. Holly Herndon has created an AI version of her voice — if you go to this website and upload an audio file, her AI-voice will sing it for you.

As she says on her site:

My voice is precious to me! It is 1 of 1 🥰

Voice Models, in combination with machine learning technology, already allow for anyone to clone a voice to generate music and media, and the opportunities and complications inherent to these techniques will only intensify!

This development raises novel questions about voice ownership that I think can be addressed by DAO governance 🤝

Right now “voice ownership” is a question our society is grappling with. Voice is intimately tied to identity, and AIs don’t have “identity”: they are everyone and no one; but they can sound like anyone.

There’s a history in English verse of “Anonymous poetry.” This is probably the most famous:

Knowing how many authors turned out to be women after initially publishing their works anonymously (e.g., Jane Austen) or under the names of men (e.g., the Brontë sisters, who published as the Bell brothers), Virginia Woolf said:

A woman writing sounded absurd to the people of the past, and few would have condescended to read a book written by a woman, if they knew. Last week I suggested — hypothetically — the idea of assigning a book written by an AI to one of my students, and they flew immediately into a near-rage: “no no no!!” they said, “a machine does not live, it does not experience! I will not read a book written by a machine!”

Most AI in fiction uses machines as stand-ins for marginalized and subjugated people; and so if we are repelled by the idea of reading a book written by an AI, we have to ask ourselves if this is bigotry. At least as yet, I don’t think so: if you told me a chicken had learned to write and composed a novel, even if it were riddled with problems, I’d be interested in reading it. I would want to see what the world is like to a chicken. Because a chicken is alive; it lives and dies; it can be in only one place at one time. Its brain may be tiny, its faculties limited, but it is more of an experiencing being than an AI.

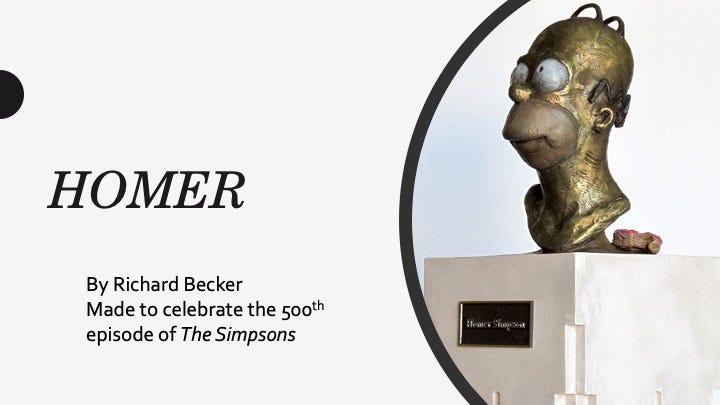

Maybe the AI will attain a being-ness, or we’ll imagine that it does (ELIZA effect). When there is no author, we like to invent one. Homer may or may not have existed, yet there are busts of his face in museums.

I prefer this bust though: it was made by a single human being named Richard Becker. It was made for the 500th episode celebration of The Simpsons. The people who worked on the show had a party and took their pictures with this bust of Homer.

Since I showed you two real busts of fictional people, I’d like to show you an AI-generated fictional bust of a fictional person:

Again, it generated many dozens of images, most of them unremarkable. I chose this one because it interested me. Right now, at least, people are still in control at the level of curation. When we use these tools, we assume the manager’s role.

Experiences matter; an AI could only attain wisdom, theoretically, by living and experiencing a limited life, in the way we do, or any animal does: it would have to be born, it would have to know that its life is finite and fragile, its ultimate death would have to be inevitable, and it would have to spend many long midnights alone with its fears, its hopes, its embarrassments, its pleasures.

That is when we will want to read its books or listen to its songs; when it’s at least as experienced as a chicken. Visual art is a little different though: sometimes we consider color and shape mere “decoration.” And so AI-generated art will filter into the low-stakes spaces of our lives.

Context matters: bullshit isn’t a problem if it’s play. Let us not be deceived. Value that which has emerged from the life, time, and mind of your fellow human beings.

Wow, Amy, brilliant stuff. So many complex, philosophical ideas to ponder.

Just this morning I read an epic essay by a whip-smart writer friend who discussed the idea that when we experience art, we by definition bring with us all of our past experiences of art too. For example, our experience of Picasso will be impacted by how much other art we've already consumed, especially how much surrealist art.

So, I wonder, will we be more comfortable with art constructed by AIs once we've already had the opportunity to do so? In other words, once it's not totally novel and terrifying, could it become normalized?

Again, very interesting piece! I'm going to take a stab at an AI riff soon. Need to get a lot smarter on the topic first (and in general).

I really loved reading this especially since I saw an earlier version of the slide show and we’ve talked about so many of these ideas recently but also for years. I’m also really glad your colleagues value your contributions in this area. I’m sure your students do as well.